Note: This is a rewritten version of this post from a year ago.

Here at HackingChristianity, we’ve long been suspicious of church metrics and of the UMC’s increased reliance on quantitative measurement. As supervisory distance increases (fewer district superintendents, larger districts), church leadership is by necessity choosing to emphasize comparative metrics. Metrics are helpful for accountability within what they measure, but is there a way to show where the system is flawed, where what the church measures doesn’t translate to what church leadership really values?

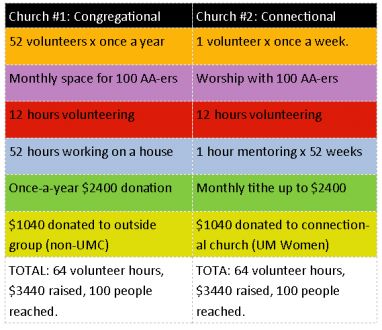

I recently found a chart to explain what I mean. A year ago at a district meeting of churches in the Portland area, the clergy were given the following chart to gauge our outreach efforts and to help with planning. I’ve spruced it up and made it clearer, but it’s from The Externally Focused Quest: Becoming the Best Church for the Community by Swanson & Rusaw. You can view the original chart here in Google Books, but here’s my version:

Basically, the idea is that you place a dot in each square where your church has an outreach program, person, or offering, according to how often it happens. Then you evaluate. Are there a ton of events that happen yearly and raise money (or donate materials, i.e., “things”)? Are there more programs that happen weekly and have lots of volunteers? You can see how this splits our outreach into categories to see where the needs are.

The authors of the chart outline that there are two movements that make a church more vital over time:

- Movement vertically (from money to projects to people): our hearts grow stronger for outreach the closer we get to people in need, so movement from giving money during a church service to committing to a project to working face-to-face with people “grows” the heart and allows God to work more clearly in it.

- Movement horizontally (from yearly to quarterly to monthly to weekly): for our members advanced in age or decreased in ability, their only viable option may be to open their wallets more deeply as they contribute to the church financially more often. Likewise, some members may not have the money to give, but can volunteer more often as their schedules allow. Both are indicative of growth in affection towards outreach.

Thus a church that is growing in a heart for missions would have a good balance across the chart, though if the chart were weighted, dots in weekly personal outreach would count the most.
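For the spreadsheet-minded, here is a minimal sketch of how the chart described above could be tallied. The row and column names follow the two movements (money to projects to people, yearly to weekly). The weighting formula is purely my own assumption for illustration and is not from Swanson & Rusaw; it just makes dots count more as they move up and to the right.

```python
from collections import Counter

# Axes of the chart, ordered from "least" to "most" along each movement.
ROWS = ["money", "projects", "people"]                    # vertical movement
COLUMNS = ["yearly", "quarterly", "monthly", "weekly"]    # horizontal movement


def tally(dots):
    """Count the dots a church places in each (row, column) square."""
    grid = Counter()
    for row, column in dots:
        if row not in ROWS or column not in COLUMNS:
            raise ValueError(f"unknown square: {row!r}, {column!r}")
        grid[(row, column)] += 1
    return grid


def weighted_score(grid):
    """Hypothetical weighting: each dot is worth (row rank) x (column rank).

    A yearly money dot is worth 1; a weekly people dot is worth 12.
    """
    return sum(
        count * (ROWS.index(row) + 1) * (COLUMNS.index(column) + 1)
        for (row, column), count in grid.items()
    )


# Example: one yearly offering and one weekly face-to-face ministry.
example_dots = [("money", "yearly"), ("people", "weekly")]
example_grid = tally(example_dots)
example_score = weighted_score(example_grid)
```

Any other weighting would work just as well; the point is only that the position of a dot carries information that a single total would lose.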

So this is a great chart. It’s great not only in a utilitarian way for your congregation (feel free to borrow it!) but also as a way to illuminate the problem with church metrics in the United Methodist Church as it stands.

A Tale of Two Churches (Or only “One” to the UMC)

I have an example chart here. I filled it in with TWO different churches that would report the same numbers (dollars, people, people served) for very different actions. So you would compare the two orange boxes, or the two reds. Check out the chart and we’ll outline it below.

You can see how the activities are different but both involve outreach. But here’s the confounding piece:

BOTH churches would report the exact same number to the church metrics website.

For example, one church could (following the blue boxes) have one individual spend 52 hours once a year on a VIM trip. The other church could mentor a different child for one hour each week and also spend 52 hours in outreach. They would be quantitatively the same (52 hours reported at the end of the year) but qualitatively different (one served one family’s house, the other served 52 children on a regular basis).
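To make the arithmetic concrete, here is a small sketch of the two outreach logs from the 52-hour example. The `Entry` record, the week number of the trip, and the child labels are all hypothetical; I am assuming the dashboard only ever sees the yearly sum of hours.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    week: int            # week of the year the outreach happened (1-52)
    hours: float         # hours spent in that week
    person_served: str   # who was served (illustrative label)


def reported_hours(log):
    """The single number that ends up in the yearly report."""
    return sum(entry.hours for entry in log)


def distinct_people(log):
    """How many different people or households were served."""
    return len({entry.person_served for entry in log})


def active_weeks(log):
    """How many weeks of the year saw any outreach at all."""
    return len({entry.week for entry in log})


# Church A: one person, one 52-hour VIM trip (week 30 is an arbitrary choice).
vim_trip = [Entry(week=30, hours=52, person_served="one family")]

# Church B: one hour a week, a different child each week.
mentoring = [
    Entry(week=w, hours=1, person_served=f"child {w}") for w in range(1, 53)
]

same_report = reported_hours(vim_trip) == reported_hours(mentoring)
```

Both logs total 52 hours, so `same_report` is true, yet `distinct_people` and `active_weeks` give 1 for the trip and 52 for the mentoring. The sum throws away exactly the difference that matters here.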

Is one better than the other? Not really. But when you start to add the comparisons together, look at what you get:

For example, Detroit churches (I picked them solely because theirs was high up in a Google search!) have this page outlining what would and would not be counted in the vital signs dashboards. I went through and categorized the activities above based on that page’s outline. YES, donations to Lifewatch and donations to UMW would be counted in the same category (not kidding!). And look at the two very different churches that would report the same “metrics.”

- Church #1 does a yearly event for the homeless, provides space for AA groups, volunteers for Beth Moore’s ministries, goes on a yearly mission trip, has families who give once a year, and sends external donations quarterly to non-United Methodist groups. They support non-UMC events and see their mission as an exchange interface. They are on the way towards congregationalism.

- Church #2 does weekly job training with the homeless, involves itself with quarterly worship services for dependency groups, volunteers at an after-school ministry, and mentors children weekly. Their donations go to United Methodist groups and their families give less but more frequently. They support UMC events and see their mission as an interaction interface on a regular basis in this strongly connectional church.

Quantitatively, they would submit identical reports to the United Methodist powers-that-be. But I would dare you to find anyone who would say that church #1 is more vital than church #2. Identical numbers cannot capture the picture of a church that is involved on a deeper level and has a budget spread across many givers rather than dependent on a few kingmakers in the congregation.

The Mission of the United Methodist Church is to make disciples of Jesus Christ for the transformation of the world. It seems that the things we should be counting should be about transformation, not accumulation. And the way church metrics are currently set up, we are doing the opposite.

Your turn

Joey Reed linked to the original article last year and includes this commentary:

Expecting diagnostic metrics to fix things is like treating a fever with a thermometer. As it turns out, there’s more to waking up the churches than simply counting the sheep — something we knew all along, but couldn’t quantify. Indeed, we were barely able to articulate the alternatives to jotting down our numbers and struggling to find their meaning.

So, your turn!

- How can “alternative” metrics like this chart impact your ministry context?

- In what ways can we better evaluate quantitative and qualitative data together?

- How can increased supervisory distance be bettered (not replaced) by church metrics?

Thanks for your comments!

You nailed this one and I like the fever analogy. I was at a church that did more for its community than any other church in town, and reached a population that the UM church normally doesn’t reach. The apportionment system is just too heavy a tax on that kind of missionary church and because of that the DS hates that church and does everything to subvert its ministry. BTW the church was in an area where there were many other denominational churches, Baptist, Presbyterian, Disciples of Christ, & Church of Christ all within a few blocks. The United Methodist Church is the only one still open and doing ministry in that area. All the others have relocated or closed. Hardly unsuccessful.

And yet perhaps quantitatively unsuccessful! Great example!

I tell people at audit every year that behind every figure, number, or dollar they put on a piece of paper there is a name, a mission, a ministry. Metrics are only good insomuch that they track something else. If you turn in a worship attendance of 75 – who are those 75 people? Why do they come to your church? How were their lives changed? If you turn in that your church brought in $100,000 this year – where did it come from? Who sacrificed to make ministry possible? If you spent $90,000 this year – where did it go? As the old saying goes, if someone looked at the church’s checkbook, what could they tell about our values and mission?

Numbers tell a story, but they don’t tell the whole story. I track my church’s metrics, but I know the people behind those metrics.

Jeremy,

Just out of curiosity, who knows about the numbers that are reported? I turn in my numbers and know that they get turned into the Conference. I know that other numbers are turned in but I never see a report.

Is the congregation supposed to get a report on the numbers submitted and what they mean?

Thanks!

By the way, I am laity

“The government are very keen on amassing statistics. They collect them, add them, raise them to the nth power, take the cube root and prepare wonderful diagrams. But you must never forget that every one of these figures comes in the first instance from the village watchman, who just puts down what he damn pleases.”

— http://en.wikipedia.org/wiki/Josiah_Stamp,_1st_Baron_Stamp